Hewlett Packard Enterprise Accelerates AI Training with New Turnkey Solution Powered by NVIDIA

13 November 2023 - 11:00AM

Business Wire

New solution for research centers and large enterprises to

accelerate generative AI integrates industry-leading AI/ML

software, hardware, networking, and services

IN THIS ARTICLE

- Supercomputing solution for generative AI is purpose-built to

streamline the model development process with an AI/ML software

stack that helps customers accelerate generative AI and deep

learning projects, including LLMs and deep learning recommendation

models

- HPE introduces an AI training solution that is preconfigured

and pretested with AI/ML software, industry-leading supercomputing,

accelerated compute, networking, storage, and services — the first

system to feature the quad NVIDIA GH200 Grace Hopper Superchip

configuration

- Delivered with services for installation and set-up, this

turnkey solution is designed for use in AI research centers and

large enterprises to realize improved time-to-value and speed up

training by 2-3X

Hewlett Packard Enterprise (NYSE: HPE) today announced a

supercomputing solution for generative AI designed for large

enterprises, research institutions, and government organizations to

accelerate the training and tuning of artificial intelligence (AI)

models using private data sets. This solution comprises a software

suite enabling customers to train and tune models and develop AI

applications. The solution also includes liquid-cooled

supercomputers, accelerated compute, networking, storage, and

services to help organizations unlock AI value faster.

“The world’s leading companies and research centers are training

and tuning AI models to drive innovation and unlock breakthroughs

in research, but to do so effectively and efficiently, they need

purpose-built solutions,” said Justin Hotard, executive vice

president and general manager, HPC, AI & Labs at Hewlett

Packard Enterprise. “To support generative AI, organizations need

to leverage solutions that are sustainable and deliver the

dedicated performance and scale of a supercomputer to support AI

model training. We are thrilled to expand our collaboration with

NVIDIA to offer a turnkey AI-native solution that will help our

customers significantly accelerate AI model training and

outcomes.”

Software tools to build AI applications, customize pre-built

models, and develop and modify code are key components of this

supercomputing solution for generative AI. The software is

integrated with HPE Cray supercomputing technology that is based on

the same powerful architecture used in the world’s fastest

supercomputer and powered by NVIDIA GH200 Grace Hopper Superchips.

Together, this solution offers organizations the unprecedented

scale and performance required for big AI workloads, such as large

language model (LLM) and deep learning recommendation model (DLRM)

training. Using HPE Machine Learning Development Environment on

this system, the open source 70 billion-parameter Llama 2 model was

fine-tuned in less than 3 minutes [i], translating directly to faster

time-to-value for customers. The advanced supercomputing

capabilities of HPE, supported by NVIDIA technology, improve system

performance by 2-3X [ii].

“Generative AI is transforming every industrial and scientific

endeavor,” said Ian Buck, vice president of Hyperscale and HPC at

NVIDIA. “NVIDIA’s collaboration with HPE on this turnkey AI

training and simulation solution, powered by NVIDIA GH200 Grace

Hopper Superchips, will provide customers with the performance

needed to achieve breakthroughs in their generative AI

initiatives.”

A powerful, integrated AI solution

The supercomputing solution for generative AI is a

purpose-built, integrated, AI-native offering that includes the

following end-to-end technologies and services:

- AI/ML acceleration software – A suite of three software

tools will help customers train and tune AI models and create their

own AI applications.

- HPE Machine Learning Development

Environment is a machine learning (ML) software platform

that enables customers to develop and deploy AI models faster by

integrating with popular ML frameworks and simplifying data

preparation.

- NVIDIA AI Enterprise helps

organizations adopt leading-edge AI with security, stability,

manageability, and support. It offers extensive frameworks,

pretrained models, and tools that streamline the development and

deployment of production AI.

- HPE Cray Programming Environment

suite offers programmers a complete set of tools for developing,

porting, debugging and refining code.

- Designed for scale – Based on the HPE Cray EX2500, an

exascale-class system, and featuring industry-leading NVIDIA GH200

Grace Hopper Superchips, the solution can scale up to thousands of

graphics processing units (GPUs) with an ability to dedicate the

full capacity of nodes to support a single AI workload for faster

time-to-value. The system is the first to feature the quad GH200

Superchip node configuration.

- A network for real-time AI – HPE Slingshot Interconnect

offers an open, Ethernet-based high performance network designed to

support exascale-class workloads. Based on HPE Cray technology,

this tunable interconnect supercharges performance for the

entire system by enabling extremely high speed networking.

- Turnkey simplicity – The solution is complemented by HPE

Complete Care Services, which provides global specialists for

set-up, installation and full lifecycle support to simplify AI

adoption.

The future of supercomputing and AI will be more sustainable

By 2028, it is estimated that the growth of AI workloads will

require about 20 gigawatts of power within data centers [iii].

Customers will require solutions that deliver a new level of energy

efficiency to minimize the impact of their carbon footprint.

Energy efficiency is core to HPE’s computing initiatives, which

deliver solutions with liquid-cooling capabilities that can drive

up to 20% performance improvement per kilowatt over air-cooled

solutions and consume 15% less power [iv].

Today, HPE delivers the majority of the world’s top 10 most

efficient supercomputers using direct liquid cooling (DLC), which is

featured in the supercomputing solution for generative AI to

efficiently cool systems while lowering energy consumption for

compute-intensive applications.

HPE is uniquely positioned to help organizations unleash the

most powerful compute technology to drive their AI goals forward

while helping reduce their energy usage.

Availability

The supercomputing solution for generative AI will be generally

available in December through HPE in more than 30 countries.

Additional Resources

- HPE expands portfolio of HPE Cray Supercomputing solutions for

AI and HPC

- NVIDIA GH200 Grace Hopper Superchip architecture

whitepaper

About Hewlett Packard Enterprise

Hewlett Packard Enterprise (NYSE: HPE) is the global

edge-to-cloud company that helps organizations accelerate outcomes

by unlocking value from all of their data, everywhere. Built on

decades of reimagining the future and innovating to advance the way

people live and work, HPE delivers unique, open and intelligent

technology solutions as a service. With offerings spanning Cloud

Services, Compute, High Performance Computing & AI, Intelligent

Edge, Software, and Storage, HPE provides a consistent experience

across all clouds and edges, helping customers develop new business

models, engage in new ways, and increase operational performance.

For more information, visit: www.hpe.com

[i] Using 32 HPE Cray EX2500 nodes with 128 NVIDIA H100 GPUs at 97% scaling efficiency, a 70 billion-parameter Llama 2 model was fine-tuned in internal tests on a 10 million token corpus in less than 3 minutes. Model tuning code and training parameters were not optimized between scaling runs.

[ii] Standard AI benchmarks, BERT and Mask R-CNN, using an out-of-box, non-tuned system comprising the HPE Cray EX2500 Supercomputer using an HPE Cray EX254n accelerator blade with four NVIDIA GH200 Grace Hopper Superchips. The independently run tests showed 2-3X performance improvement as compared to MLPerf 3.0 published results for an A100-based system comprising two AMD EPYC 7763 processors and four NVIDIA A100 GPUs with NVLink interconnects.

[iii] Avelar, Victor; Donovan, Patrick; Lin, Paul; Torell, Wendy; and Torres Arango, Maria A., The AI disruption: Challenges and guidance for data center design (White paper 110), Schneider Electric:

https://download.schneider-electric.com/files?p_Doc_Ref=SPD_WP110_EN&p_enDocType=White+Paper&p_File_Name=WP110_V1.1_EN.pdf

[iv] Based on estimates from internal performance testing conducted by HPE in April 2023 that compares an air-cooled HPE Cray XD2000 with the same system using direct liquid cooling. Using a benchmark of SPEChpc™ 2021, small, MPI + OpenMP, 64 ranks, 14 threads estimated results per server, the air-cooled system recorded 6.61 performance per kW and the DLC system recorded 7.98 performance per kW, representing a 20.7% difference. The same benchmark recorded results of 4539 watts for the air-cooled system’s chassis power and the DLC system recorded 3862 watts, representing a 14.9% difference.
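As a quick check on the footnote above, the 20.7% and 14.9% figures can be reproduced from the stated measurements. This is a minimal sketch, not HPE's methodology: the footnote does not say which system was used as the baseline, so the code assumes both percentages are taken relative to the air-cooled system, and the function and variable names are illustrative only.

```python
def percent_gain(baseline: float, improved: float) -> float:
    """Relative increase of `improved` over `baseline`, in percent."""
    return (improved - baseline) / baseline * 100.0


def percent_reduction(baseline: float, reduced: float) -> float:
    """Relative decrease of `reduced` below `baseline`, in percent."""
    return (baseline - reduced) / baseline * 100.0


# Figures quoted in the footnote (SPEChpc 2021 estimates, HPE Cray XD2000).
AIR_PERF_PER_KW = 6.61     # air-cooled, performance per kW
DLC_PERF_PER_KW = 7.98     # direct liquid cooled, performance per kW
AIR_CHASSIS_WATTS = 4539   # air-cooled chassis power
DLC_CHASSIS_WATTS = 3862   # direct liquid cooled chassis power

# Assumption: the air-cooled system is the baseline for both comparisons.
perf_gain = percent_gain(AIR_PERF_PER_KW, DLC_PERF_PER_KW)
power_saving = percent_reduction(AIR_CHASSIS_WATTS, DLC_CHASSIS_WATTS)

print(f"Performance per kW gain: {perf_gain:.1f}%")     # 20.7%
print(f"Chassis power reduction: {power_saving:.1f}%")  # 14.9%
```

Under that assumption, both results match the footnote's rounded values, which the body of the release further rounds to "up to 20%" and "15%".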

View source version on businesswire.com: https://www.businesswire.com/news/home/20231113129459/en/

Cristina Thai cristina.thai@hpe.com

Hewlett Packard Enterprise (NYSE:HPE)

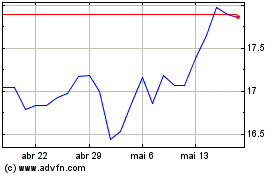

Gráfico Histórico do Ativo

De Abr 2024 até Mai 2024

Hewlett Packard Enterprise (NYSE:HPE)

Gráfico Histórico do Ativo

De Mai 2023 até Mai 2024